The cost of not protecting privacy

22 October, 2019

The apparent cost of protecting data privacy, as many people think, is the loss of utility of the data. Thus, it is frequently seen as an additional burden for data owners or processors.

But what is the cost of not protecting it?

To reflect on the consequences of a lack of privacy protection, I will present some well-known re-identification examples which show that merely removing the IDs while publishing the precise data does not actually anonymize it.

Big data vs privacy or big data with privacy?

The improvement of technologies for sensing, storing and processing huge volumes of data has fueled the development of data mining, machine learning, and artificial intelligence.

These technologies frequently use big data to better train their algorithms and improve their precision on classification and prediction tasks.

However, collecting massive amounts of personal information for classification and profiling may have some drawbacks. The first and most evident is that apparently non-identifying information may in fact be used for re-identification.

Constantly monitoring the (online and offline) whereabouts of an individual may reveal her schedule, the places she visits, which in turn, may reveal her religious beliefs, sexual preferences and political opinions, among other information that she may prefer to keep private.

Also, very accurate profiling may allow for micro-targeted advertising and potentially manipulate individual preferences, together with the additional problems of AI bias or algorithmic bias.

From a purely big data perspective, more information, and more precise information, is better. On the other hand, the more precise the information available about a person, the less her privacy is preserved.

Thus, it seems that the principles of big data are opposed to the privacy design strategies of minimizing the information processed and keeping it at the highest level of aggregation, with the least possible detail, at which it is still useful.

However, the amount of privacy gained is not necessarily the amount of utility lost, nor the other way around (i.e., it is not a zero-sum game). There are techniques that allow for keeping a balance between these two seemingly opposed objectives. It is possible to have big data with privacy, though it is challenging.

Privacy and anonymity in the digital world

The most common notion of anonymous comes from its Greek etymology, ανώνυμος (anonymos), which means without a name.

So, it is interesting to look at the definitions of privacy and anonymity from the Merriam-Webster dictionary.

Privacy:

- a: The quality or state of being apart from company or observation.

- b: Freedom from unauthorized intrusion.

Anonymous:

- of unknown authorship or origin.

- not named or identified.

- lacking individuality, distinction, or recognizability.

We may wonder what these definitions mean in the digital world, when we are in the solitude of our home or office and our computer interactions are being recorded in some database.

Or when we are moving through the city with a camera, a microphone and a GPS in our phones, while some apps have access (with our consent) to our location, among other data.

Next, I will present a few cases of anonymous data publishing and how they were re-identified.

Governor Weld’s re-identification

In 1997, Latanya Sweeney, a graduate student in computer science at MIT at that time, published a paper showing that 87% of all Americans are uniquely identified by ZIP code, birthdate, and sex.

She had obtained the hospital data that the Massachusetts Group Insurance Commission (GIC) had released for the purpose of improving healthcare.

William Weld, the Massachusetts Governor at that time, assured the public that the GIC had protected patient privacy by deleting direct identifiers.

Coincidentally, on May 18, 1996, William Weld was to receive an honorary doctorate from Bentley College and to give the keynote graduation address, when he collapsed in front of the audience.

Sweeney bought a voter list database (for $20), which contained the name, address, ZIP code, birth date, and sex of every voter. She then linked this data to the published GIC data, found Governor Weld’s health records, and sent them to his office.
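The linkage step can be illustrated with a minimal sketch. All records, field names and the `link` helper below are hypothetical and invented for illustration; this is not Sweeney's actual procedure or data, only the general idea of joining two datasets on their shared quasi-identifiers.

```python
from collections import defaultdict

# Hypothetical voter list: names alongside quasi-identifiers.
voters = [
    {"name": "Alice Example", "zip": "02138", "birth_date": "1945-07-31", "sex": "F"},
    {"name": "Bob Example", "zip": "02139", "birth_date": "1962-01-15", "sex": "M"},
    {"name": "Carol Example", "zip": "02139", "birth_date": "1962-01-15", "sex": "F"},
]

# Hypothetical "de-identified" hospital records: no names, same quasi-identifiers.
hospital = [
    {"zip": "02138", "birth_date": "1945-07-31", "sex": "F", "diagnosis": "condition A"},
    {"zip": "02139", "birth_date": "1962-01-15", "sex": "M", "diagnosis": "condition B"},
    {"zip": "02141", "birth_date": "1980-03-02", "sex": "F", "diagnosis": "condition C"},
]

QUASI_IDENTIFIERS = ("zip", "birth_date", "sex")


def key(record):
    """Project a record onto its quasi-identifiers."""
    return tuple(record[field] for field in QUASI_IDENTIFIERS)


def link(voters, hospital):
    """Return (name, diagnosis) pairs for hospital records that match exactly one voter."""
    names_by_key = defaultdict(list)
    for voter in voters:
        names_by_key[key(voter)].append(voter["name"])

    matches = []
    for record in hospital:
        names = names_by_key.get(key(record), [])
        if len(names) == 1:  # a unique combination means a re-identification
            matches.append((names[0], record["diagnosis"]))
    return matches


reidentified = link(voters, hospital)
for name, diagnosis in reidentified:
    print(name, "->", diagnosis)
```

The sketch only reports matches where the combination of ZIP code, birth date and sex is unique in the voter list, which is the situation the 87% figure describes.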

This case of re-identification has influenced the development of US health privacy policy, namely the privacy protections of the Health Insurance Portability and Accountability Act of 1996 (HIPAA).

AOL re-identification

In 2006, New York Times reporters followed the queries of user 4417749 among the 20 million Web search queries of more than 650,000 members that had recently been collected and published by AOL.

They found out that user 4417749 was a 62-year-old widow with three dogs who lived in Lilburn, Ga. She was looking for “60 single men” and solutions for a “dog that urinates on everything”, among other queries.

When the reporters presented the list of queries from user 4417749 to Thelma Arnold, and asked if they were hers, she was shocked to hear that AOL had saved and published three months of them.

“My goodness, it’s my whole personal life,” she said. “I had no idea that somebody was looking over my shoulder.”

When they asked about the reasons for various queries, such as “nicotine effects on the body,” she explained: “I have a friend who needs to quit smoking and I want to help her do it”. She added that she frequently searched for medical conditions on behalf of her friends, to help them.

She concluded that she would drop her AOL subscription.

Two AOL employees were fired, and the chief technology officer resigned.

AOL was sued, accused of violating the Electronic Communications Privacy Act and of fraudulent and deceptive business practices. The suit sought at least $5,000 for every person whose search data was exposed.

Netflix prize re-identification

Two months after AOL released anonymous search-engine logs, on October 2, 2006 Netflix launched a contest, offering 1 million dollars to the team that improved their current recommender system Cinematch by at least 10%.

It was awarded on September 21, 2009 to the team “BellKor’s Pragmatic Chaos”, a team that was the result of merging some teams (BigChaos, BellKor and Pragmatic Theory) to achieve the target improvement.

The following question and answer are still in the FAQs section of Netflix prize:

Is there any customer information in the dataset that should be kept private?

No, all customer identifying information has been removed; all that remains are ratings and dates… Even if, for example, you knew all your own ratings and their dates you probably couldn’t identify them reliably in the data because only a small sample was included (less than one-tenth of our complete dataset) and that data was subject to perturbation. Of course, since you know all your own ratings that really isn’t a privacy problem is it?

A few weeks after the contest began, Arvind Narayanan and Vitaly Shmatikov from the University of Texas identified several Netflix users by comparing their reviews in the Netflix data to ones posted on the Internet Movie Database website.
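The idea of comparing ratings across two sources can be sketched as follows. This is a deliberately simplified scoring rule on made-up data, not the algorithm Narayanan and Shmatikov published; the record layout, the tolerance parameters and the function names are assumptions for illustration.

```python
from datetime import date

# Hypothetical anonymized records: user id -> {movie: (rating, rating date)}.
anonymized = {
    101: {
        "Movie A": (5, date(2005, 3, 1)),
        "Movie B": (2, date(2005, 3, 9)),
        "Movie C": (4, date(2005, 4, 2)),
    },
    102: {
        "Movie A": (3, date(2005, 1, 20)),
        "Movie D": (5, date(2005, 2, 11)),
    },
}

# Hypothetical public reviews posted under a real name on another site.
public_profile = {
    "Movie A": (5, date(2005, 3, 3)),
    "Movie C": (4, date(2005, 4, 1)),
}


def score(public, candidate, max_rating_gap=1, max_days=14):
    """Count public reviews that approximately match a candidate record."""
    hits = 0
    for movie, (rating, when) in public.items():
        if movie not in candidate:
            continue
        cand_rating, cand_when = candidate[movie]
        close_rating = abs(rating - cand_rating) <= max_rating_gap
        close_date = abs((when - cand_when).days) <= max_days
        if close_rating and close_date:
            hits += 1
    return hits


def best_match(public, records):
    """Return the single best-scoring user id, or None if there is no clear winner."""
    scores = {uid: score(public, rec) for uid, rec in records.items()}
    ranked = sorted(scores, key=scores.get, reverse=True)
    if not ranked or scores[ranked[0]] == 0:
        return None
    if len(ranked) > 1 and scores[ranked[0]] == scores[ranked[1]]:
        return None  # ambiguous: two candidates fit equally well
    return ranked[0]


print(best_match(public_profile, anonymized))
```

Allowing small gaps in rating and date is meant to reflect that the published data was perturbed and that public reviews need not be posted on the same day as the rating.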

On December 17, 2009, four Netflix users filed a class action lawsuit against Netflix, alleging that Netflix had violated US fair trade laws and the Video Privacy Protection Act by releasing the datasets.

The suit sought more than $2,500 in damages for each of more than 2 million Netflix customers.

On March 12, 2010, Netflix cancelled a second $1M prize competition that it had announced the previous August.

NYC taxis re-identification

Chris Whong requested and published the data of 173 million NYC taxi trips, with pickup and drop-off locations and times. He was able to do so through the Freedom of Information Law, in his words “the computer-illiterate grandmother of Open Data”.

The plate numbers were supposedly “anonymized” by using a hash function (MD5).

Considering that the number of possible combinations of NYC plates is around 19M, Pandurangan found a way of “de-anonymizing” them.

This joke may give you a hint of how he obtained the plate numbers from their hashes.
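When the space of possible inputs is small, an unsalted hash can be reversed simply by hashing every candidate and looking up the result. The sketch below assumes a hypothetical identifier format of one digit, one letter and two digits (26,000 combinations); the real NYC formats and the exact method used may differ.

```python
import hashlib
from itertools import product
from string import ascii_uppercase, digits


def md5_hex(text):
    """Return the hexadecimal MD5 digest of an ASCII string."""
    return hashlib.md5(text.encode("ascii")).hexdigest()


def build_lookup():
    """Precompute hash -> plate for every plate in the assumed format."""
    table = {}
    for d1, letter, d2, d3 in product(digits, ascii_uppercase, digits, digits):
        plate = d1 + letter + d2 + d3  # hypothetical format, e.g. "5X55"
        table[md5_hex(plate)] = plate
    return table


lookup = build_lookup()  # 10 * 26 * 10 * 10 = 26,000 entries

# A "published" hash, as it would appear in the released dataset.
published_hash = md5_hex("5X55")

# Reversing it is a single dictionary lookup.
recovered = lookup[published_hash]
print(recovered)
```

A space of around 19M candidates is larger than this toy example, but still small enough to enumerate on an ordinary computer.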

However, Pandurangan admits:

“As always, things are more complicated than they seem at first. The strategies described above will anonymise the numbers of the licenses and taxicabs, but commenters have pointed out that there are a number of other ways in which PII may be reconstructed.”

For example, atockar:

- Queried the pickups after midnight and before 6am, around some famous “gentlemen’s” club location.

- Mapped the drop-off coordinates.

- Found clusters and examined one of the addresses of a cluster.

- Queried other drop-offs from that location at similar timestamps, to find out that this gentleman also frequented similar establishments.

- Finally, used social networks to find out his property value, ethnicity, relationship status, court records and a profile picture.
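The first three steps above can be sketched as a filter followed by a coarse grouping of drop-off coordinates. The trips, the venue coordinates, the radius and the grid precision are all hypothetical; this is not atockar's actual query, only an illustration of how little is needed to find a recurring destination.

```python
from collections import Counter
from datetime import datetime
from math import hypot

VENUE = (40.7500, -73.9900)  # hypothetical venue coordinates (lat, lon)
RADIUS_DEG = 0.001           # hypothetical search radius, in degrees

# Hypothetical trips: pickup time, pickup and drop-off coordinates.
trips = [
    {"pickup_time": datetime(2013, 1, 5, 2, 15), "pickup": (40.7501, -73.9901), "dropoff": (40.7312, -73.9544)},
    {"pickup_time": datetime(2013, 1, 12, 3, 40), "pickup": (40.7499, -73.9899), "dropoff": (40.7313, -73.9543)},
    {"pickup_time": datetime(2013, 1, 12, 14, 0), "pickup": (40.7500, -73.9900), "dropoff": (40.7000, -74.0000)},
    {"pickup_time": datetime(2013, 1, 13, 1, 0), "pickup": (40.8000, -73.9500), "dropoff": (40.7312, -73.9544)},
    {"pickup_time": datetime(2013, 1, 19, 4, 5), "pickup": (40.7500, -73.9901), "dropoff": (40.7802, -73.9801)},
]


def late_night_near(trip, venue, radius):
    """True if the pickup is between midnight and 6am and close to the venue."""
    d_lat = trip["pickup"][0] - venue[0]
    d_lon = trip["pickup"][1] - venue[1]
    return hypot(d_lat, d_lon) <= radius and 0 <= trip["pickup_time"].hour < 6


def dropoff_clusters(trips, venue=VENUE, radius=RADIUS_DEG, precision=3):
    """Count drop-offs per grid cell, using rounded coordinates as a crude clustering."""
    cells = Counter()
    for trip in trips:
        if late_night_near(trip, venue, radius):
            lat, lon = trip["dropoff"]
            cells[(round(lat, precision), round(lon, precision))] += 1
    return cells


clusters = dropoff_clusters(trips)
print(clusters.most_common(1))
```

A cell that keeps reappearing is a candidate home address, which is what makes the remaining steps (looking up the address and the person behind it) possible.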

Privacy as a negative right

Privacy, as freedom from unauthorized intrusion, may be understood as having a space of no interference, a space of neither influence nor inference. But in the digital world, the space is different.

Cambridge Analytica

Michal Kosinski showed that it is possible to use Facebook users’ Likes to generate quite accurate profiles, and to predict sensitive personal attributes such as sexual orientation, ethnicity, religious and political views, personality traits, intelligence, happiness, use of addictive substances, parental separation, age, and gender.

He had to write a letter clarifying that he was not related to the Cambridge Analytica scandal, in which the personal information of more than 87 million Facebook users was compromised.

This event brought Facebook’s CEO Mark Zuckerberg to testify before the US Congress.

Facebook was recently fined $5 billion by the Federal Trade Commission (FTC) for various privacy violations. It is the largest fine imposed by the FTC; however, Facebook made $5 billion in profits in the first three months of last year.

To put this on a human scale, it is equivalent to someone who earns the minimum wage in Spain being fined for washing or fixing a car in the street.

This may explain the opinion that:

“Companies are free today to monitor Americans’ behavior and collect information about them from across the web and the real world to do everything from sell them cars to influence their votes to set their life insurance rates — all usually without users’ knowledge of the collection and manipulation taking place behind the scenes.”

In contrast, under Europe’s General Data Protection Regulation (GDPR), data subjects should be informed whenever their personal information is processed, and they should have agency over that processing.

A privacy policy compatible with legal requirements should be enforced, and the data controller should be able to demonstrate compliance with the privacy policy and legal requirements. The amount of personal information processed should be minimal and hidden from plain view. Processing should be done in a distributed fashion whenever possible, and personal information should be processed at the highest level of aggregation, with the least possible detail, at which it is still useful.

Many Americans are probably being indirectly protected by the GDPR, since the biggest global tech companies must comply with these European rules.

Open data with privacy

I will just give a final example of privacy protection in cities: the Berkman Klein Center for Internet & Society publication on open data privacy. There, you may find processes for protecting privacy that cities can adopt when publicly releasing data.

Some of its recommendations are:

- Cities should conduct risk-benefit analyses for the design and implementation of open data programs, assuming that there are legal and ethical obligations as well as inevitable risks.

- Privacy should be considered at each stage of the data lifecycle of collection, maintenance, release, and deletion. Operational structures and processes to codify privacy management should be developed widely throughout the City.

- Processes and toolkits of data-sharing strategies beyond the binary open/closed distinction should be documented.

- Public engagement and priorities should be the essential aspects for data management. The expected benefits, privacy risks and measures to protect privacy, should be shared.

They conclude that it is a key responsibility of becoming data-driven to protect the individuals represented in data, and remind us that computer scientists are still developing our understanding of the bounds of data privacy, but also that cities will benefit from explicit instructions for mitigating privacy risks that balance benefit and risk based on our current knowledge of effective approaches.

It is indeed true that computer scientists are developing and understanding the bounds of data privacy.

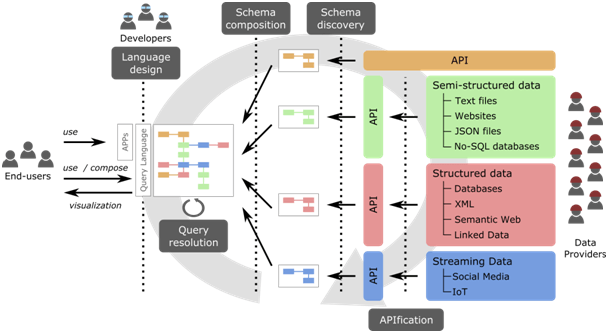

At the KISON group we are currently carrying out the CONSENT research project, “GDPR-compliant CONSumer oriENTed IOT”, to provide cost-effective security and privacy technologies that guarantee the data protection levels established by the GDPR in the context of the IoT.

I have been discussing examples of failures of privacy protection, but it is important to remark that in all of them the published data was anonymous only in the etymological sense (ανώνυμος, without a name), so it was not actually protected.

The same happens with security. Just to check: how many cases can you remember in which privacy or security worked fine, and how many in which it failed?

The costs I have been mentioning here are direct costs of not protecting privacy.

Perhaps it is more important to ask ourselves: what may be the cost of not having privacy?

Julián Salas

Postdoctoral researcher at KISON